> NEURAL_AESTHETICS // LATENT_INIT

Process: Deconstructing the mathematical ontology of generative diffusion.

The Death of the Pixel

To understand generative artificial intelligence, we must discard our fundamental assumption about how digital images are made. A human artist begins with a blank canvas and adds pigment. A traditional 3D rendering engine computes light bouncing off polygons. Generative AI does neither.

Models like Midjourney, Stable Diffusion, and DALL-E do not "paint" pixels, nor do they stitch together fragments of existing photographs. Instead, they perform an act of high-dimensional sculpture. They begin with pure, unadulterated chaos—mathematical static—and systematically remove the noise until an image remains.

This process occurs within a mathematical construct known as the Latent Space. It is a terrain invisible to the human eye, governed by non-Euclidean geometry, where concepts, colors, and textures exist as numerical coordinates. The prompter is not a painter; they are a cartographer navigating a universe of compressed meaning.

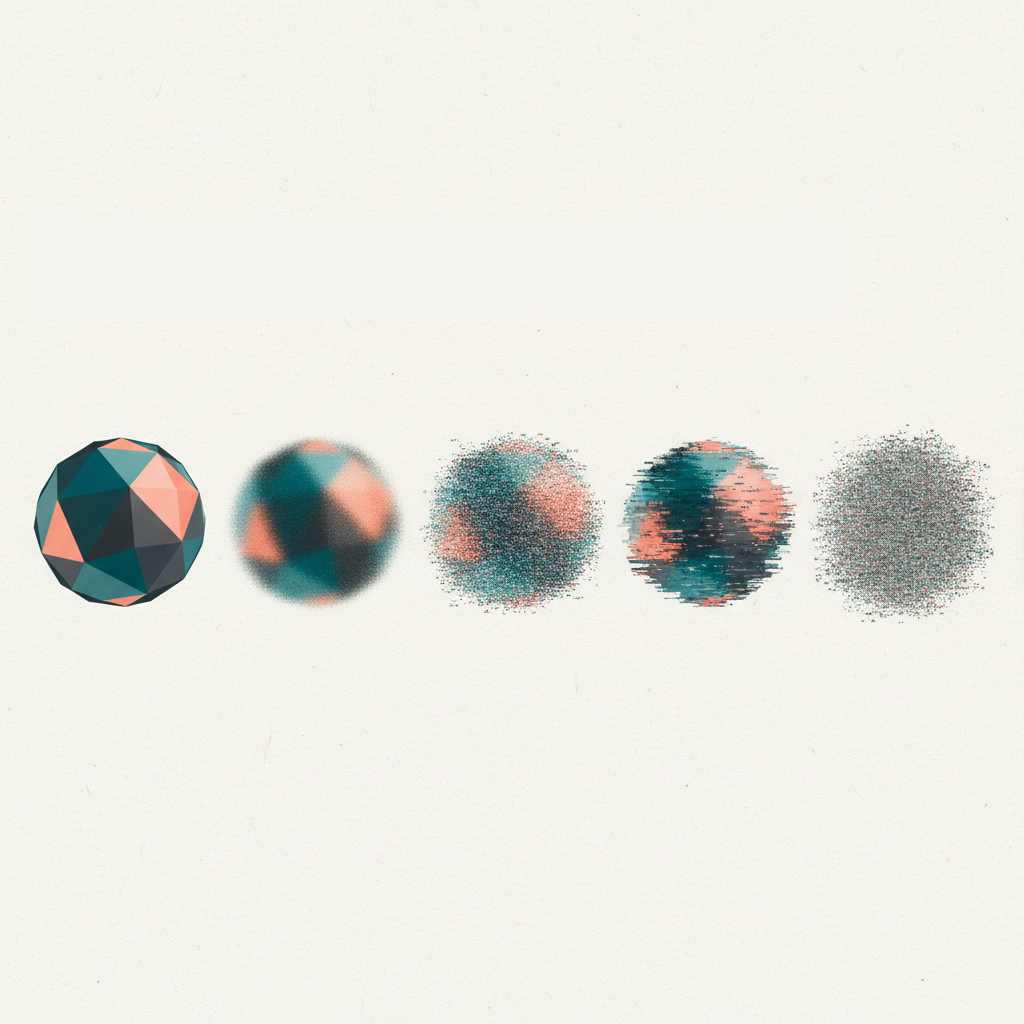

Forward Diffusion: Destroying the Image

To teach a machine how to create, researchers first had to teach it how to destroy. The training phase of a diffusion model relies on a process called Forward Diffusion.

An original, clear image is taken from a dataset. Over hundreds of iterative steps (often around 1,000), a specific mathematical formula adds a microscopic layer of Gaussian noise. The image degrades, step by step, until it is indistinguishable from the static on an untuned television screen.

Why destroy the image? Because the neural network (specifically, a U-Net architecture) is trained to observe this destruction and learn how to reverse it.

[ REVERSE_DIFFUSION_ENGINE ]

Inference phase: The U-Net predicts and subtracts noise iteratively to reveal the latent structure. Drag the slider to simulate the denoising steps.

/* U-Net Denoising loop */

for t in reversed(range(1000)):

noise_pred = unet(x_t, t, prompt_embeds)

x_t = remove_noise(x_t, noise_pred)

What is a Latent Space?

To compute images efficiently, the model does not operate in "Pixel Space" (where an image is millions of individual RGB values). Instead, an encoder compresses the image into a "Latent Space."

Imagine a vast, multi-dimensional map. On a 2D map, coordinates are (X, Y). In the latent space of Stable Diffusion, coordinates might have 512 or more dimensions. In this space, distance equals semantic similarity. A picture of a "cat" is mathematically located near a picture of a "tiger," but far away from a picture of a "toaster."

When you type a text prompt, a separate model (like OpenAI's CLIP) translates your words into numerical embeddings. These embeddings act as GPS coordinates, instructing the U-Net on exactly where to navigate within the latent manifold to find the geometric structure you requested.

[ SEMANTIC_VECTOR_ROUTING ]

Adjust the semantic weights to navigate the 2D projection of the latent manifold. Observe the generation of spatial coordinates (Tensors).

Hallucination and the Edge of the Manifold

The true artistic power of diffusion models lies not in their ability to replicate what exists, but in their capacity to interpolate the void. The "manifold" is the region of the latent space densely populated with recognized concepts (e.g., human faces, cars, trees).

When an artist crafts a paradoxical prompt—such as "a brutalist concrete spaceship made of translucent jellyfish tissue"—they force the algorithm into the empty, unmapped space between known coordinates. The AI is compelled to hallucinate, synthesizing a visual bridge between mutually exclusive concepts. This is the birthplace of the new digital surrealism.

Interpolation

Smoothly blending two distinct points in the latent space to create seamless transitional forms.

Extrapolation

Pushing the mathematical bounds past the training data, often resulting in eerie, glitch-like, or profound abstractions.

Conclusion: The New Cartographers

By recognizing the Latent Space as the actual medium, we understand that prompt engineering is a misnomer. We are not programming; we are exploring. The AI artist acts as an ontological explorer, shining a flashlight into the dark, N-dimensional architecture of collective human imagery.

The death of the pixel implies the birth of the concept. As diffusion models evolve, the technical mastery of the creator will not be judged by the steady hand that holds the brush, but by the intellectual audacity required to chart the uncharted territories of the machine's mind.

>> Bibliographic_References.log

- [01] Rombach, R., et al. (2022). High-Resolution Image Synthesis with Latent Diffusion Models (Stable Diffusion). CVPR.

- [02] Radford, A., et al. (2021). Learning Transferable Visual Models From Natural Language Supervision (CLIP). OpenAI.

- [03] Ho, J., Jain, A., & Abbeel, P. (2020). Denoising Diffusion Probabilistic Models. NeurIPS.